jmtd → Jonathan Dowland's Weblog

Below are the five most recent posts in my weblog.

- HMS Blueberry

- iPad Mini (2013)

- nvim-µwiki

- Digital gardening

- Ladytron

You can also see a reverse-chronological list of all posts, dating back to 1999.

Royals are my favourite ships in No Man's Sky. The HMS Blueberry is not my first Exotic/Royal ship (that was the Gravity Hirakao XVI, and a story for another time).

After years of on-off playing, I recently found my first Royal multitool: Blue, with gold detailing. I have a Royal-style jetpack (I don't remember where I got that). I thought I'd try and colour-match my multitool, ship, jetpack and outfit. Since I only had one multitool, I matched the others to it. And the HMS Blueberry (credit for the name goes to Beatrice) was the Exotic in my collection which matched.

The HMS Blueberry is viewable in my showroom, Honest Jon's Lightly-Used Starships.

In or around 2014 I bought an iPad Mini (2), and following the normal lifecycle of iOS devices, a major OS update eventually killed it as a useful, general-purpose device: operating it was just too sluggish. It remained useful as a streaming media player for a little while longer until eventually the big streamers (BBC iPlayer, Netflix, etc.) stopped supporting the version of their app which the iPad could install: the last officially supported iOS was 12.4.8 in July 2020, and by November it was officially dead.

During its useful life, the iPad Mini witnessed Apple's transition from 32 to 64 bit apps. In the 32 bit days, there was a little cottage industry of app developers, and in particular, game developers. There were even several independent websites (App Shopper, Pod Gamer, Free-App Hero), which aided in sorting through the morass of apps to find the good ones (then as now, the App Store itself was almost impossible to effectively browse). This all went away during the 32/64 transition, as many small-scale developers weren't actively developing their applications or games any more, and weren't prepared to pay the time or Apple tax to rebuild and publish them as 64 bit.

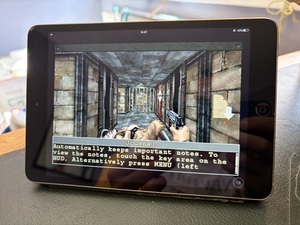

The last version of iOS that supported 32 bit apps on this device was 10.3.3, and by luck, there are some methods available to install this old version of iOS on the Mini 2 today. A couple of years ago I did so, but I kept no notes, so sadly I can't report on which method I used. But it worked, and I was able to install a bunch of old 32 bit games that I had no access to on more modern devices.

Prior to John Carmack's[1] departure from id Software, he'd been responsible for publishing several experimental id Software games on iOS. These mostly disappeared in the 64 bit transition. Amongst them are ports of Wolfenstein 3D, classic Doom, some RAGE tie-ins, but perhaps most interestingly, at least two original games, designed for the phone form factor: Doom 2 RPG and Wolfenstein RPG.

Another notable game that disappeared was "Civilization Revolution", a cut-down Civ game that for a while I was obsessed with. Rather than port it to 64 bit, the publisher withdrew it, and then published a "new" game "Civilization Revolution 2", requiring a separate purchase. Sadly, it is rubbish, nowhere near as good as the first one.

Anyway, having managed to downgrade it to the 32 bit iOS and install these old lost games, I then, of course, never played them and the device continued to gather dust. I should make clear that running such an old unpatched iOS version means it's not safe at all to put any kind of sensitive information on this, including entering passwords. I don't recommend even opening the web browser. However, this 12-year-old device does have some use as an e-reader, especially for certain types of ebook or magazine that I've struggled to engage with on other devices. That's a topic for another blog post.

1. Carmack reportedly also had a pivotal role in convincing Steve Jobs to permit native apps and provide an App Store on iOS: the plan had been to solely support web apps, at least for 3rd parties.

In January 2025, as a prerequisite for something else, I published a minimal neovim plugin called nvim-µwiki. It's essentially just the features from vimwiki that I regularly use, which is a small fraction of them. I forgot to blog about it. I recently dusted it off and cleaned it up. You can find it here, along with a longer list of its features and how to configure it: https://github.com/jmtd/nvim-microwiki

I had a couple of design goals. I didn't want to define a new filetype, so this is designed to work with the existing markdown one. I'm using neovim, so I wanted to leverage some of its features: this plugin is written in Lua, rather than vimscript. I use the parse trees provided by TreeSitter to navigate the structure of a document.

I also decided to "plug into" the existing tag stack navigation, rather than define another dimension of navigation (along with buffers, etc.) to track: following a wiki-link pushes onto the tag stack, just as if you followed a tag.

This was my first serious bit of Lua programming, as well as my first dive into neovim (or even vim) internals. Lua is quite reasonable. Most of the vim and neovim architecture is reasonable. The emerging conventions about structuring neovim plugins are mostly reasonable. TreeSitter is, well, interesting, but the devil is very much in the details. Somehow all together the experience for me was largely just frustrating, and I didn't really enjoy writing it.

I was reading a post on Alex Chan's website[1] that referenced the concept of digital gardens, an analogy for organising information which dates back to the 90s. This old concept is gaining new traction today as a contrast to the "endless stream" used and abused by social media, and also to how blogs are typically presented.

This site, my homepage, has a blog, and that's the bit that most people who interact with the site will experience. That's partly because it's the bit that gets syndicated out: via feeds; on Planet Debian and downstream from it; once upon a time on Twitter; nowadays on the Fediverse.

However, there's more to my homepage than that. The rest of it may be of little interest to anyone besides me, but it's useful to me, at least. So I may switch focus a little bit from mainly writing blog posts, and tend to the rest of the garden a bit more.

Some recent seeding and pruning: recently my guest status at Newcastle University came up for renewal, so I wrote down my goals in the Historic Computing Committee for the next year or so, and put them here: nuhcc. I've also been pondering what I'm up to in Debian at the moment, so I took some time to add my current projects to that page.

1. I'm reminded that I should really publish a "blog roll" of cool blogs I'm following at the moment, of which Alex Chan's is one.

I saw Ladytron perform in Digital, Newcastle last night. The last time I saw them was, I think, at the same venue, 18 years ago. Time flies!

Back in the day (perhaps their heyday, perhaps not!) Ladytron ploughed a particular sonic furrow and did it very well. Going into the gig I had set my expectations that, should they play just these hits, I'd have a good time.

The gig exceeded my expectations. The setlist very much did not lean into their best-known period: the more recent few albums were very well represented and to me this felt very confident. The lead singer, Helen Marnie, demonstrated some excellent range, particularly on some of the new songs. Daniel Hunt did a lot of backing vocals and they were really complementary to Helen's: underscoring but not overpowering. I enjoyed nerding out watching Mira Aroyo's excellent wrangling of her Korg MS-20. One highlight was an encore performance of Light & Magic, which was arguably the "alternate version" as available on the expanded versions of that album or the Remixed and Rare companion.

I thought I'd try to put together a 5-track playlist for a friend who attended the gig but isn't super familiar with them. As usual this is hard. I'm going to avoid the obvious hits, try to represent their whole career and try to ensure the current trio each get a vocal turn in the selection.

They actually released their latest album, Paradises, yesterday as well. One track from it is in the list below.

(If you can't see anything, the bandcamp embeds have been stripped out by whatever you are viewing this with)

Older posts are available on the all posts page.